If your CEO asked for your confidence level on next month’s production forecast, would you give a number or a range?

Let’s get started with data-driven planning.

Be honest. When you commit to a schedule, allocate resources, or promise a delivery date in today’s Shipbuilding 4.0 environment, are those commitments based on verifiable data, or on a combination of gut feeling, past experience, and the “expert judgment” of your team leads? Modern shipyard performance metrics demand more than intuition.

Even for the most seasoned project managers and engineers, this question hits a nerve. You are masters of your craft, yet without reliable shipyard performance metrics you operate in a fog of uncertainty.

You are constantly forced to make high-stakes decisions without being able to answer the most fundamental questions with certainty:

- “How long will this phase really take?”

- “Where is our actual biggest bottleneck right now?”

- “How many teams do we truly need for the next stage?”

Effective construction data analysis could provide these answers.

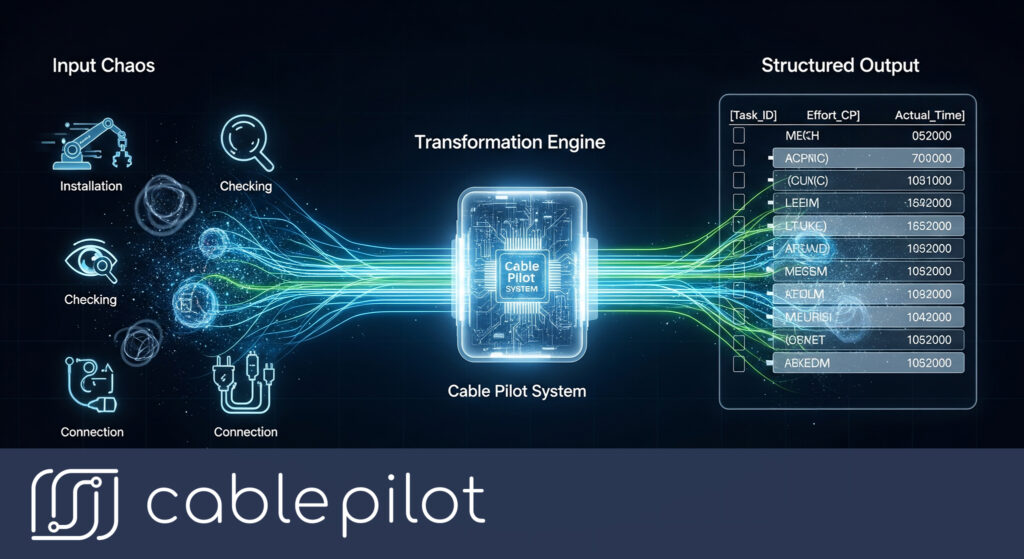

This isn’t a failure of your experience or your team’s dedication. It’s a failure of data. The reason our plans are so often wrong is that they are built on a foundation of data chaos. In this article, we will argue that the leap to truly effective, data-driven planning is not about adopting complex algorithms. It’s about first winning the war against bad data. We’ll show you how to build a foundation of clean, structured information and how that foundation allows you to transform planning from a dark art into a precise science.

The Foundation of Failure: Why You Can’t Analyze Chaos

The dream of advanced shipbuilding analytics is alluring: predictive models that forecast delays and algorithms that optimize resource allocation. But there’s a hard truth: you cannot apply sophisticated construction data analysis to chaotic data. It’s the oldest rule in computing: Garbage In, Garbage Out (GIGO).

Before you can even think about advanced predictive project management, you must solve the foundational problem that plagues nearly every shipyard: the poor quality of your source data. Modern shipyard performance metrics require clean, structured information first.

Most shipyards are drowning in data, but starving for insights. The data that does exist is crippled by three systemic flaws that make any meaningful analysis impossible.

- Flaw #1: It’s Subjective. Your current data is based on human opinion, not objective fact. A supervisor reports a task is “80% complete.” An engineer describes a work package as “highly complex.” What do these terms mean? They are unquantifiable feelings. You cannot build a reliable forecast on a foundation of feelings. They are useless for any real construction data analysis.

- Flaw #2: It’s Fragmented. Your data lives in a hundred different places. The master cable list is in one Excel file, the procurement data is in an ERP system, the quality reports are in a separate database, and daily progress updates are buried in email chains and text messages. These information silos make it impossible to get a single, holistic view of the project. You can’t analyze the relationship between procurement delays and installation slowdowns if the data for each lives in a different universe.

- Flaw #3: It’s Latent. The data you analyze is almost always a report on the past. By the time you receive a weekly summary, the information is already 48-72 hours old. You are analyzing history. In the dynamic environment of a shipyard, this is like trying to make a decision in a high-speed chase by looking at a photograph of the street from a minute ago. Meaningful analysis requires real-time data that reflects the project’s current state, not its historical one.

This combination of subjective, fragmented, and latent information creates a toxic data environment where real analysis is impossible. Any ‘forecast’ built on this foundation isn’t a forecast at all; it’s just organized guessing. The first, most critical step in any data-driven planning initiative is to fix the data at its source.

Building a Decision-Grade Data Foundation

To transform your data from a chaotic liability into a strategic asset, you must systematically capture three types of objective information. These are the pillars of any robust analytics program.

Pillar 1: Objective Workload (The “What”)

First, you must replace subjective estimates of “effort” or “complexity” with a standardized, objective metric for quantifying the volume of work. You need a unit of measurement that is consistent across all tasks, teams, and projects.

- The Old Way: “This is a hard task.” (A subjective opinion)

- The New Way: “This task has a calculated workload of 500 Cable Points.” (An objective fact)

A metric like Cable Points (CP) is calculated by the system based on the physical, objective attributes of the work—cable size, number of cores, installation method, termination types, etc. It provides a universal language for measuring scope. A task of 500 CP is always the same amount of work, regardless of who performs it. This gives you a solid baseline for every analysis you want to run.
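To make the idea concrete, here is a minimal sketch of how a Cable Points score could be derived from physical attributes alone. The article does not publish the real formula, so every weight below, the `cable_points` function, and the use of cable length as an input are assumptions chosen purely for illustration.

```python
from dataclasses import dataclass

# Hypothetical weights: illustrative values only, not a published CP formula.
METHOD_FACTOR = {"tray": 1.0, "conduit": 1.5, "pulled": 2.0}
TERMINATION_POINTS = {"lug": 2.0, "gland": 3.0, "connector": 5.0}


@dataclass(frozen=True)
class Cable:
    tag: str
    length_m: float            # assumed input; the article lists size, cores, method, terminations
    cross_section_mm2: float
    cores: int
    method: str
    terminations: tuple[str, ...]


def cable_points(cable: Cable) -> float:
    """Score one cable from its physical attributes only (illustrative formula)."""
    size_factor = 1.0 + cable.cross_section_mm2 / 100.0
    core_factor = 1.0 + 0.1 * (cable.cores - 1)
    pulling = cable.length_m * size_factor * core_factor * METHOD_FACTOR[cable.method]
    terminating = sum(TERMINATION_POINTS[t] * cable.cores for t in cable.terminations)
    return round(pulling + terminating, 1)


cable = Cable("C-1138", length_m=40.0, cross_section_mm2=50.0, cores=4,
              method="conduit", terminations=("gland", "gland"))
print(cable_points(cable))
```

The point of the sketch is that no input depends on who does the work or how anyone feels about it, so the same cable always yields the same score.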

Pillar 2: Precise Time (The “When”)

Next, you must replace vague timelines with precise, automated timestamps for every event. Every time a component changes its state, that event must be logged.

- The Old Way: “We finished that section sometime last week.” (A vague memory)

- The New Way: “The status of Cable C-1138 was changed from ‘Installed’ to ‘Terminated’ by User #54 on June 28th at 14:32.” (An immutable, timestamped record)

A modern platform automatically creates these status logs. When an installer scans a QR code and updates a task, the system doesn’t just change the status; it creates a permanent, auditable record of the event. This gives you a rich, granular dataset of how long each step in the process actually takes.
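As a rough sketch of what such a status log makes possible, the snippet below models each change as an immutable event and derives how long a cable spent in each status. The `StatusEvent` fields, the `time_in_status` helper, the year, and the first event's timestamp are hypothetical; only the second event mirrors the example above.

```python
from dataclasses import dataclass
from datetime import datetime, timedelta


@dataclass(frozen=True)  # frozen: a logged event is never edited afterwards
class StatusEvent:
    cable_tag: str
    old_status: str
    new_status: str
    user_id: int
    at: datetime


def time_in_status(events: list[StatusEvent], cable_tag: str) -> dict[str, timedelta]:
    """Return how long a cable spent in each status it has already left."""
    history = sorted((e for e in events if e.cable_tag == cable_tag), key=lambda e: e.at)
    durations: dict[str, timedelta] = {}
    # Pair each event with the next one: the gap is the time spent in new_status.
    for entered, left in zip(history, history[1:]):
        status = entered.new_status
        durations[status] = durations.get(status, timedelta()) + (left.at - entered.at)
    return durations


# Assumed sample data: the year and the first event are made up for illustration.
log = [
    StatusEvent("C-1138", "Not Started", "Installed", 54, datetime(2024, 6, 26, 9, 15)),
    StatusEvent("C-1138", "Installed", "Terminated", 54, datetime(2024, 6, 28, 14, 32)),
]
print(time_in_status(log, "C-1138"))
```

Because durations are computed from the events rather than typed in by hand, nobody has to remember when a section was finished.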

Pillar 3: Complete Context (The “Where”)

Finally, you must ensure every piece of work is tied to its full context. A task doesn’t exist in a vacuum; it exists in a specific physical location and is part of a larger system and discipline.

- The Old Way: A flat list of tasks in a spreadsheet.

- The New Way: A data model where every task is nested within a physical hierarchy (Vessel → Area → Deck → Location) and tagged with its associated System (e.g., “Fire Detection”) and Discipline (e.g., “Electrical”).

This contextual data is critical. It allows you to analyze performance not just at the project level, but at a highly granular level, helping you pinpoint exactly where your problems and opportunities lie.
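One possible shape for such a data model is sketched below, assuming a simple record per task that carries its full hierarchy and tags. The `Task` fields, the `workload_by` helper, and the sample values are illustrative names, not a real platform's schema.

```python
from collections import defaultdict
from dataclasses import dataclass


@dataclass(frozen=True)
class Task:
    task_id: str
    vessel: str
    area: str
    deck: str
    location: str
    system: str
    discipline: str
    workload_cp: float


def workload_by(tasks: list[Task], *levels: str) -> dict[tuple[str, ...], float]:
    """Sum workload (CP) grouped by any combination of context fields."""
    totals: dict[tuple[str, ...], float] = defaultdict(float)
    for task in tasks:
        key = tuple(getattr(task, level) for level in levels)
        totals[key] += task.workload_cp
    return dict(totals)


# Made-up sample tasks for illustration.
tasks = [
    Task("T1", "Hull 101", "Forward Zone", "Deck 3", "Room 3-12", "Fire Detection", "Electrical", 500.0),
    Task("T2", "Hull 101", "Forward Zone", "Deck 3", "Room 3-14", "Fire Detection", "Electrical", 300.0),
    Task("T3", "Hull 101", "Forward Zone", "Deck 4", "Room 4-02", "Lighting", "Electrical", 200.0),
]
print(workload_by(tasks, "deck"))
print(workload_by(tasks, "deck", "system"))
```

A flat spreadsheet can hold the same columns, but keeping the hierarchy on every record is what lets one function answer questions at the vessel, deck, or system level.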

When you have clean, structured data built on these three pillars, you finally have a foundation solid enough to support real analysis. You have the raw material for insight.

From Data to Decisions: Asking Smarter Questions

With a foundation of decision-grade data, you can finally move beyond guesswork and start answering critical management questions with a high degree of confidence. The quality of your answers is directly related to the quality of your data.

Question 1: “How many teams do we need for the next phase of electrical work?”

- The Answer Without Data: You ask your most experienced supervisor. He thinks back to a similar project from five years ago and says, “Let’s go with three teams. That feels about right.” This is planning by anecdote.

- The Answer With Data: You query the system.

- “What is the total calculated workload (in CP) for all ‘Not Started’ electrical tasks in the Forward Zone?” The system answers: 60,000 CP.

- “What was the average monthly productivity (in CP per team) for electrical work on our last project?” The system analyzes the historical data and answers: 20,000 CP per team, per month.

- The Decision: The calculation is simple: 60,000 CP divided by 20,000 CP per team per month = 3 team-months of work, so three teams can complete the phase in one month. Your decision is not a guess; it’s a data-driven conclusion. You can now allocate your resources with precision, avoiding both under-staffing (which leads to delays) and over-staffing (which destroys your margin).
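The staffing arithmetic above can be sketched in a few lines. The `teams_needed` function and its `months` parameter are hypothetical additions that generalize the one-month case to any time window; the figures are the ones quoted in the example.

```python
import math


def teams_needed(remaining_cp: float, cp_per_team_month: float, months: float) -> int:
    """Smallest whole number of teams that clears the workload within the window."""
    if cp_per_team_month <= 0 or months <= 0:
        raise ValueError("productivity and duration must be positive")
    team_months = remaining_cp / cp_per_team_month  # total effort required
    return math.ceil(team_months / months)          # round up: teams come in whole units


print(teams_needed(60_000, 20_000, months=1))  # 3
print(teams_needed(60_000, 20_000, months=2))  # 2
```

Rounding up matters: 1.5 teams over two months means two teams, with the slack visible in advance rather than discovered on the deck.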

Question 2: “We’re falling behind schedule on Deck 3. Why?”

- The Answer Without Data: You hold a meeting that devolves into a session of circular finger-pointing. You leave an hour later with no clear answer, only lower morale and a new meeting scheduled for tomorrow.

- The Answer With Data: You open your project dashboard and run a blocker analysis.

- You filter for all tasks on Deck 3 that are currently “Blocked.”

- You group the blockers by their root cause type.

- The data instantly visualizes the problem: 70% of all blockers on Deck 3 are of the type “Missing Materials.”

- The Decision: The problem is not your people; it is a specific, identifiable bottleneck in your supply chain for materials to that exact area. Instead of hosting a grievance session, you can make a targeted intervention, calling the specific supplier or re-allocating materials from another part of the project. You are solving the real problem, not just managing the symptoms.
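The filter-and-group steps of this blocker analysis can be sketched as follows. The record layout (`deck`, `status`, `blocker_type`), the `blocker_shares` helper, and the sample data are assumptions; the sample is constructed so that it reproduces the 70% figure from the example.

```python
from collections import Counter


def blocker_shares(tasks: list[dict], deck: str) -> list[tuple[str, float]]:
    """Percentage of blocked tasks on one deck per root cause, largest first."""
    causes = Counter(
        t["blocker_type"] for t in tasks
        if t["deck"] == deck and t["status"] == "Blocked"
    )
    total = sum(causes.values())
    return [(cause, 100.0 * n / total) for cause, n in causes.most_common()]


# Made-up sample: 10 blocked tasks on Deck 3, plus unrelated tasks that must be ignored.
tasks = (
    [{"deck": "Deck 3", "status": "Blocked", "blocker_type": "Missing Materials"}] * 7
    + [{"deck": "Deck 3", "status": "Blocked", "blocker_type": "Awaiting Inspection"}] * 2
    + [{"deck": "Deck 3", "status": "Blocked", "blocker_type": "Access Restricted"}]
    + [{"deck": "Deck 3", "status": "In Progress", "blocker_type": None}] * 5
    + [{"deck": "Deck 4", "status": "Blocked", "blocker_type": "Missing Drawings"}] * 3
)
for cause, share in blocker_shares(tasks, "Deck 3"):
    print(f"{cause}: {share:.0f}%")
```

This only works if blockers are recorded with a fixed set of root-cause types; free-text reasons would have to be categorized before they could be counted.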

This is the essence of shipbuilding analytics. It’s about transforming vague, high-level problems into specific, actionable, data-driven insights.

Conclusion: Analytics Is a Discipline, Not a Dark Art

In the past, planning was an art form, mastered by a few seasoned veterans who had “seen it all.” In the era of Shipbuilding 4.0, this is no longer a viable or competitive model. The complexity of modern vessels has outstripped the capacity of human intuition.

True data-driven planning is not about buying a piece of “shipbuilding analytics software” and hoping it will magically provide answers. Successful predictive project management starts with the discipline of collecting clean, structured data. The insights from construction data analysis are a natural result of that discipline, and they are what make a Shipbuilding 4.0 transformation possible across your entire operation.

By building a foundation on objective workload, precise time, and complete context, you create an environment where data becomes a strategic weapon. This transforms planning from a constant source of anxiety into your most powerful competitive advantage, allowing you to deliver projects with a level of predictability and profitability your competitors can only dream of.

Ready to lay the foundation for a truly data-driven shipyard? Subscribe to our newsletter to get expert insights on building a high-performance data culture!
